Backpropagation in Neural Networks: Complete Intuition, Math, and Step-by-Step Explanation

- Aryan

- Nov 24, 2025


What is Backpropagation?

Backpropagation is the backbone algorithm used to train neural networks. To understand it, let’s look at a practical scenario.

Suppose we are working with a student dataset where we have input features like CGPA and IQ, and we need to predict the LPA (salary package).

The Data:

| cgpa | iq | lpa |
| --- | --- | --- |
| 8 | 80 | 8 |
| 7 | 70 | 7 |

To solve this, we design a neural network architecture. In any neural network, the core components are weights (w) and biases (b).

When we say we are "training" a network, we are essentially trying to find good values for these weights and biases. For a given dataset, Backpropagation computes how each weight and bias should change so that, step by step, the network's predictions move closer to the true outputs.

How does it work?

It follows a specific series of steps. Let's look at a slightly larger set of student data for this walkthrough:

| iq | cgpa | lpa |
| --- | --- | --- |
| 80 | 8 | 3 |
| 60 | 9 | 5 |
| 70 | 5 | 8 |
| 120 | 7 | 11 |

We have four students. For this example, assume the activation function in our nodes is linear.

The Step-by-Step Process

Initialization

First, we initialize the weights and biases. While these are typically initialized with random values, for the sake of this calculation, let's assume we start with weights (w) at 1 and biases (b) at 0.

Forward Propagation

We select a single point (one student's row) and feed that data into the neural network. The network calculates a prediction using the initial weights.

Since our weights are random (or arbitrary), the initial prediction will likely be way off.

Why? Because the current weights and biases are not yet correct.

Calculate the Loss

We need to measure how wrong we are. We choose a loss function—let's use MSE (Mean Squared Error).

We calculate the loss by comparing our predicted value against the true value.

Note: We cannot change the "True Label" (the actual reality), but we can change our prediction by adjusting the weights and biases.
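The forward pass and loss calculation described so far can be sketched in a few lines of Python. This is a minimal illustration, not the article's own code: it assumes a 2-input, 2-hidden, 1-output network with linear activations, all weights set to 1 and all biases to 0, and it uses the first student from the table. The function name `forward` and the list layout of the weights are illustrative choices.

```python
# Forward pass for a 2-2-1 network with linear activations (assumed layout).
# w_hidden[j][i] is the weight from input i to hidden node j.

def forward(x, w_hidden, b_hidden, w_out, b_out):
    """Return (hidden activations, prediction) for one input row."""
    hidden = [
        sum(w * xi for w, xi in zip(w_row, x)) + b
        for w_row, b in zip(w_hidden, b_hidden)
    ]
    y_hat = sum(w * h for w, h in zip(w_out, hidden)) + b_out
    return hidden, y_hat

# Initialization from the text: every weight is 1, every bias is 0.
w_hidden = [[1.0, 1.0], [1.0, 1.0]]
b_hidden = [0.0, 0.0]
w_out = [1.0, 1.0]
b_out = 0.0

x = [80.0, 8.0]   # first student: iq = 80, cgpa = 8
y_true = 3.0      # lpa

hidden, y_hat = forward(x, w_hidden, b_hidden, w_out, b_out)
loss = (y_true - y_hat) ** 2  # squared error for a single point

print(hidden, y_hat, loss)  # [88.0, 88.0] 176.0 29929.0
```

A prediction of 176 against a true value of 3 shows how far off the untrained network is, which is exactly what the loss of 29929 measures.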

Backward Propagation (The Update)

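This is where the magic happens. We update the weights and biases using Gradient Descent. The goal is to minimize the error. To do this, we apply the update rule:

$$w_{\text{new}} = w_{\text{old}} - \eta \, \frac{\partial L}{\partial w_{\text{old}}}$$

$$b_{\text{new}} = b_{\text{old}} - \eta \, \frac{\partial L}{\partial b_{\text{old}}}$$

Here, η is the learning rate.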

The Mathematical Intuition

Why do we find the derivative?

In calculus, the derivative (dy/dx) tells us how a small change in x creates a change in y.

In our context, the derivative of the loss with respect to a weight (∂L/∂w) tells us: "If we slightly change the weight, how much will the loss change?"

Changing the weights changes the predicted output (ŷ), which in turn changes the Loss value. Since the Loss depends on the weights only through the prediction, we use the Chain Rule of differentiation to link the two.

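We want to calculate the gradient for our 9 trainable parameters (based on a standard 2-input, 2-hidden, 1-output architecture: 4 weights and 2 biases in the hidden layer, 2 weights and 1 bias in the output layer). Here $w^{k}_{ij}$ is the weight from node $i$ of the previous layer to node $j$ of layer $k$, $b_{kj}$ is the bias of that node, $O_{kj}$ is its output, and $x_1$, $x_2$ are the two input features:

$$\frac{\partial L}{\partial w^{1}_{11}},\ \frac{\partial L}{\partial w^{1}_{12}},\ \frac{\partial L}{\partial w^{1}_{21}},\ \frac{\partial L}{\partial w^{1}_{22}},\ \frac{\partial L}{\partial b_{11}},\ \frac{\partial L}{\partial b_{12}},\ \frac{\partial L}{\partial w^{2}_{11}},\ \frac{\partial L}{\partial w^{2}_{21}},\ \frac{\partial L}{\partial b_{21}}$$

The Derivatives using Chain Rule:

We break it down:

$$\frac{\partial L}{\partial w^{2}_{11}} = \frac{\partial L}{\partial \hat{y}} \cdot \frac{\partial \hat{y}}{\partial w^{2}_{11}}$$

First, the derivative of the Loss function with respect to the prediction, taking $L = (y - \hat{y})^2$ for a single point:

$$\frac{\partial L}{\partial \hat{y}} = -2\,(y - \hat{y})$$

Next, the derivative of the prediction with respect to the weight (assuming linear activation), where $\hat{y} = w^{2}_{11} O_{11} + w^{2}_{21} O_{12} + b_{21}$:

$$\frac{\partial \hat{y}}{\partial w^{2}_{11}} = O_{11}, \qquad \frac{\partial \hat{y}}{\partial w^{2}_{21}} = O_{12}, \qquad \frac{\partial \hat{y}}{\partial b_{21}} = 1$$

Combining them gives us the gradients for the output layer:

$$\frac{\partial L}{\partial w^{2}_{11}} = -2\,(y - \hat{y})\, O_{11}, \qquad \frac{\partial L}{\partial w^{2}_{21}} = -2\,(y - \hat{y})\, O_{12}, \qquad \frac{\partial L}{\partial b_{21}} = -2\,(y - \hat{y})$$

Similarly, we propagate back to find derivatives for the hidden layer. With $O_{11} = w^{1}_{11} x_1 + w^{1}_{21} x_2 + b_{11}$ and $O_{12} = w^{1}_{12} x_1 + w^{1}_{22} x_2 + b_{12}$:

$$\frac{\partial L}{\partial w^{1}_{11}} = \frac{\partial L}{\partial \hat{y}} \cdot \frac{\partial \hat{y}}{\partial O_{11}} \cdot \frac{\partial O_{11}}{\partial w^{1}_{11}} = -2\,(y - \hat{y})\, w^{2}_{11}\, x_1$$

$$\frac{\partial L}{\partial w^{1}_{21}} = -2\,(y - \hat{y})\, w^{2}_{11}\, x_2, \qquad \frac{\partial L}{\partial b_{11}} = -2\,(y - \hat{y})\, w^{2}_{11}$$

$$\frac{\partial L}{\partial w^{1}_{12}} = -2\,(y - \hat{y})\, w^{2}_{21}\, x_1, \qquad \frac{\partial L}{\partial w^{1}_{22}} = -2\,(y - \hat{y})\, w^{2}_{21}\, x_2, \qquad \frac{\partial L}{\partial b_{12}} = -2\,(y - \hat{y})\, w^{2}_{21}$$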

In a Nutshell

Once we have these formulas, we can easily code the algorithm.

The process basically runs in a loop:

Initialize weights and biases.

Loop over the epochs (each epoch is one full pass through the data points).

For every student, compute the prediction (Forward Propagation).

Compute the Loss.

Backpropagate to find the gradients and update the weights and biases.

We repeat this multiple times (epochs) until the loss stops decreasing and the model converges. This entire cycle of forward prediction and backward adjustment is how a network is trained with Backpropagation.
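The loop above might look like the following in plain Python. Treat it as a sketch under stated assumptions rather than a reference implementation: it assumes the 2-2-1 linear network, squared error on one student at a time, and a very small learning rate (`lr=1e-6`) chosen here only because the raw iq values are large and unscaled. The helper names (`init_params`, `gradients`, `train`) are hypothetical.

```python
# Stochastic gradient descent on a 2-2-1 linear network (assumed architecture).
# Data from the walkthrough table: (iq, cgpa) -> lpa.
data = [
    ([80.0, 8.0], 3.0),
    ([60.0, 9.0], 5.0),
    ([70.0, 5.0], 8.0),
    ([120.0, 7.0], 11.0),
]

def init_params():
    # Step 1: weights start at 1 and biases at 0, as in the text.
    return {
        "w_hidden": [[1.0, 1.0], [1.0, 1.0]],  # w_hidden[j][i]: input i -> hidden j
        "b_hidden": [0.0, 0.0],
        "w_out": [1.0, 1.0],
        "b_out": 0.0,
    }

def forward(p, x):
    hidden = [sum(w * xi for w, xi in zip(row, x)) + b
              for row, b in zip(p["w_hidden"], p["b_hidden"])]
    y_hat = sum(w * h for w, h in zip(p["w_out"], hidden)) + p["b_out"]
    return hidden, y_hat

def gradients(p, x, y):
    """Chain-rule gradients of L = (y - y_hat)^2 for one data point."""
    hidden, y_hat = forward(p, x)
    dL_dyhat = -2.0 * (y - y_hat)
    # Gradient reaching each hidden node: dL/dy_hat * dy_hat/d(hidden output).
    d_hidden = [dL_dyhat * w for w in p["w_out"]]
    return {
        "w_out": [dL_dyhat * h for h in hidden],
        "b_out": dL_dyhat,
        "w_hidden": [[d * xi for xi in x] for d in d_hidden],
        "b_hidden": d_hidden,
    }

def mean_loss(p):
    return sum((y - forward(p, x)[1]) ** 2 for x, y in data) / len(data)

def train(epochs=50, lr=1e-6):
    p = init_params()
    for _ in range(epochs):            # loop over epochs
        for x, y in data:              # one student at a time
            g = gradients(p, x, y)     # forward pass, loss gradient, backprop
            p["w_out"] = [w - lr * gw for w, gw in zip(p["w_out"], g["w_out"])]
            p["b_out"] -= lr * g["b_out"]
            p["w_hidden"] = [[w - lr * gw for w, gw in zip(row, grow)]
                             for row, grow in zip(p["w_hidden"], g["w_hidden"])]
            p["b_hidden"] = [b - lr * gb
                             for b, gb in zip(p["b_hidden"], g["b_hidden"])]
    return p

loss_before = mean_loss(init_params())
loss_after = mean_loss(train())
print(loss_before, loss_after)
```

With only four unscaled rows and linear activations, this will not fit the data well; the point is only that the mean loss after training is lower than before.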

Backpropagation in Deep Networks: The "Branching" Problem

So far, we have looked at a simple network. But what happens when we add depth?

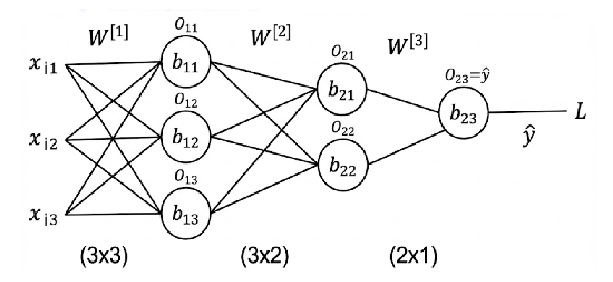

Suppose we introduce a second hidden layer. Our architecture now looks like this:

Input Layer → Hidden Layer 1 → Hidden Layer 2 → Output Layer

In this scenario (let's say a 3x3x2x1 architecture), we have 23 trainable parameters (9 + 3 for the first hidden layer, 6 + 2 for the second, and 2 + 1 for the output). Calculating the derivatives here introduces a new challenge: complexity due to branching paths.

The Chain Rule with Multiple Paths

When we calculate the gradient for the weights close to the output (like w₁₁³), it is straightforward because there is a direct path to the Loss (L).

However, the deeper we go into the network (moving backward toward the input), the trickier it gets.

Consider a weight in the first layer, w₁₁¹.

This weight affects the node O₁₁ (in Hidden Layer 1).

O₁₁ is connected to both O₂₁ and O₂₂ (in Hidden Layer 2).

Both O₂₁ and O₂₂ eventually contribute to the final prediction ŷ.

The Problem:

When we change w₁₁¹, the effect "splits" and travels through two different paths (O₂₁ and O₂₂) before recombining at the output. To find the correct derivative, we must account for both paths.

The Math: Multivariate Chain Rule

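In calculus, if a variable x influences a function h through two intermediate variables f(x) and g(x), we calculate the derivative by summing the gradients of all paths:

$$\frac{dh}{dx} = \frac{\partial h}{\partial f} \cdot \frac{df}{dx} + \frac{\partial h}{\partial g} \cdot \frac{dg}{dx}$$

Applying this logic to our neural network, the gradient for our first-layer weight w₁₁¹ becomes a sum of the gradients flowing through both nodes of the second hidden layer:

$$\frac{\partial L}{\partial w^{1}_{11}} = \frac{\partial L}{\partial \hat{y}} \left[ \frac{\partial \hat{y}}{\partial O_{21}} \cdot \frac{\partial O_{21}}{\partial O_{11}} + \frac{\partial \hat{y}}{\partial O_{22}} \cdot \frac{\partial O_{22}}{\partial O_{11}} \right] \frac{\partial O_{11}}{\partial w^{1}_{11}}$$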
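The path-summing rule can be checked numerically on a toy function. In this sketch the functions `f`, `g`, and `h` are arbitrary illustrative choices, not anything taken from the network; the point is only that adding the two path contributions matches a finite-difference estimate of the derivative.

```python
# Multivariate chain rule: x reaches h through two intermediate values f and g.
# The concrete functions are illustrative choices, not taken from the article.

def f(x):
    return x ** 2

def g(x):
    return 3.0 * x

def h(fv, gv):
    return fv * gv + gv   # h depends on both intermediates

def dh_dx_by_paths(x):
    fv, gv = f(x), g(x)
    dh_df = gv            # partial of h with respect to f
    dh_dg = fv + 1.0      # partial of h with respect to g
    df_dx = 2.0 * x
    dg_dx = 3.0
    # Sum the contribution of each path from x to h.
    return dh_df * df_dx + dh_dg * dg_dx

def dh_dx_numeric(x, eps=1e-6):
    # Central finite difference as an independent check.
    return (h(f(x + eps), g(x + eps)) - h(f(x - eps), g(x - eps))) / (2 * eps)

x = 2.0
print(dh_dx_by_paths(x), dh_dx_numeric(x))
```

Here h works out to 3x³ + 3x, so the exact derivative at x = 2 is 39, split as 24 through the f path and 15 through the g path.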

The Solution: Memoization

As you can see, the formula above is already becoming messy with just two hidden layers. Imagine a Deep Neural Network with 50 or 100 layers. If we recalculated the derivative from scratch for every single weight, the computational cost would be astronomical because we would be repeatedly solving the same derivatives over and over again.

This is where Memoization saves the day.

Memoization is a programming technique where we store the results of expensive function calls and return the cached result when the same inputs occur again.

How it works in Backpropagation:

We calculate the gradients starting from the Output Layer (moving backward).

When we compute the gradient for Layer 3, we store (cache) that value.

When we move back to Layer 2, we don't recalculate the Layer 3 logic. We simply "fetch" the stored value we just calculated.

By reusing these values, we reduce the time complexity significantly.

This leads us to the true definition of the algorithm. Backpropagation is not just finding derivatives; it is a smart combination of two mathematical/computational concepts:

Backpropagation = Chain Rule (for logic) + Memoization (for efficiency)

This combination allows us to train massive Deep Learning models efficiently, turning what would be an impossible calculation into a manageable iterative process.
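One way to picture the caching is a backward pass that keeps a single list of "delta" values per layer and reuses it for every weight feeding that layer. The sketch below assumes a 3-3-2-1 network with linear activations, squared-error loss, and constant initial weights of 0.5 (chosen only to keep the numbers easy to follow); the function names are hypothetical.

```python
# Backward pass for a 3-3-2-1 linear network, caching one "delta" per layer.
# delta[j] = dL/d(output of node j); each layer's delta is computed once and
# reused by every weight and bias feeding that layer.

def forward(weights, biases, x):
    """Return the list of activations for every layer, input included."""
    activations = [x]
    for W, b in zip(weights, biases):
        prev = activations[-1]
        activations.append(
            [sum(w * a for w, a in zip(row, prev)) + bj for row, bj in zip(W, b)]
        )
    return activations

def backward(weights, activations, y):
    """Return (weight gradients, bias gradients) for L = (y - y_hat)^2."""
    y_hat = activations[-1][0]
    delta = [-2.0 * (y - y_hat)]          # cached gradient at the output layer
    w_grads, b_grads = [], []
    for k in reversed(range(len(weights))):
        prev = activations[k]
        w_grads.insert(0, [[d * a for a in prev] for d in delta])
        b_grads.insert(0, list(delta))
        # Reuse the cached delta: sum over every path leaving node i.
        delta = [
            sum(weights[k][j][i] * delta[j] for j in range(len(delta)))
            for i in range(len(prev))
        ]
    return w_grads, b_grads

def make_params(sizes, value=0.5):
    # Constant initial weights keep the example deterministic (an assumption).
    weights = [[[value] * n_in for _ in range(n_out)]
               for n_in, n_out in zip(sizes, sizes[1:])]
    biases = [[0.0] * n_out for n_out in sizes[1:]]
    return weights, biases

weights, biases = make_params([3, 3, 2, 1])
x, y = [1.0, 2.0, 3.0], 1.0
acts = forward(weights, biases, x)
w_grads, b_grads = backward(weights, acts, y)
print(w_grads[0][0][0])  # gradient for the first weight of the first layer: 3.5
```

The inner `sum(...)` is the branching sum from the chain rule, and the `delta` list is the memoized value: nothing from the later layers is recomputed when the loop moves one layer closer to the input.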

The "Why" Behind Backpropagation

To truly master Backpropagation, we have to look beyond the algorithm and understand the mathematical engine driving it. It boils down to three core concepts: The Loss Function, The Gradient, and The Minima.

1. The Loss Function: The Function of Parameters

First, we need to clarify what we are actually differentiating.

In standard algebra, we usually write y = f(x), meaning y changes as x changes.

However, in Neural Network training, the setup is different.

The Loss Function (L) depends on the predicted output (ŷ). The prediction (ŷ), in turn, comes from the Inputs, Weights, and Biases.

Inputs (X): These are fixed. We cannot change the dataset.

Weights (W) & Biases (B): These are variable. These are the "knobs" we can turn.

Therefore, the Loss Function is essentially a function of the trainable parameters:

L = f(weights, biases)

Our goal is simple: Tune these weights and biases to find the configuration where the Loss is at its absolute minimum.

2. Derivative vs. Gradient

People often use these words interchangeably, but there is a subtle, important difference.

The Derivative (Single Variable)

If a function depends on only one variable, we calculate a derivative. It is denoted by d.

Example: y = x² + x

Differentiation: dy/dx = 2x + 1

The Gradient (Multi-Variable)

When a function depends on more than one variable (like our Loss Function, which depends on thousands of weights), we cannot just find "the" derivative. We have to find the derivative with respect to each variable individually. This is called Partial Differentiation, denoted by ∂.

Example: z = f(x, y) = x² + y²

Partial regarding x: ∂z/∂x = 2x

Partial regarding y: ∂z/∂y = 2y

The Definition: A Gradient is simply a vector (a collection) of all these partial derivatives. Since Neural Networks live in high-dimensional space (3D and beyond), we calculate Gradients, not just simple derivatives.
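As a small illustration, the gradient of z = x² + y² can be written as a list of partial derivatives and compared against a numeric estimate that nudges one variable at a time. The helper names here are illustrative assumptions.

```python
# Gradient of z = x^2 + y^2 as a vector of partial derivatives.

def z(x, y):
    return x ** 2 + y ** 2

def gradient(x, y):
    """Analytic gradient: [dz/dx, dz/dy]."""
    return [2.0 * x, 2.0 * y]

def numeric_gradient(func, point, eps=1e-6):
    """Nudge one variable at a time while holding the others fixed."""
    grads = []
    for i in range(len(point)):
        up = list(point)
        down = list(point)
        up[i] += eps
        down[i] -= eps
        grads.append((func(*up) - func(*down)) / (2 * eps))
    return grads

print(gradient(3.0, -4.0))                # [6.0, -8.0]
print(numeric_gradient(z, [3.0, -4.0]))   # approximately [6.0, -8.0]
```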

3. Intuition: Rate of Change

What does a value like dy/dx = 11 actually mean?

It represents the sensitivity of the function. It asks: "If I nudge x slightly, how much will y respond?"

Let's verify the math from our earlier example (y = x² + x):

Find the derivative: dy/dx = 2x + 1

Calculate at point x=5:

2(5) + 1 = 11

Interpretation: At the exact moment when x is 5, the rate of change is 11. If we increase x by a tiny amount, y will increase by roughly 11 times that amount. This tells us the direction and magnitude required to update our weights.
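The "roughly 11 times" claim is easy to test by actually nudging x. This short sketch compares the real change in y with the change the derivative predicts for a few step sizes; the variable names are illustrative.

```python
# y = x^2 + x has slope 2x + 1, which is 11 at x = 5.

def y(x):
    return x ** 2 + x

x0 = 5.0
slope = 2 * x0 + 1

for dx in (0.1, 0.01, 0.001):
    actual = y(x0 + dx) - y(x0)      # how much y really changed
    predicted = slope * dx           # what the derivative predicts
    print(dx, actual, predicted)
```

The two columns differ by dx², so the smaller the nudge, the closer the match.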

4. Finding the Minima (The End Goal)

Why do we care about these derivatives? Because of the concept of Minima.

In calculus, to find the lowest point of a curve (the minima), we look for the spot where the slope (derivative) is zero.

Single Parameter: If y = x², then dy/dx = 2x. Setting 2x = 0 gives us x = 0. This is where the curve bottoms out.

Multiple Parameters: We do the exact same thing, but for every single weight. If z = x² + y², we set both gradients to zero:

2x = 0 ⟹ x = 0

2y = 0 ⟹ y = 0

The minima lies at (0,0).

In Summary:

Backpropagation uses gradients to find the direction of the "steepest slope." Gradient Descent then pushes the weights toward the point where the gradient becomes zero—the point of Minimum Loss.

The Intuition: Why the Update Rule Works

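We know the formula, but why is it structured this way? Let's break down the Update Rule (the same rule applies to every weight and bias):

$$w_{\text{new}} = w_{\text{old}} - \eta \, \frac{\partial L}{\partial w_{\text{old}}}$$

To make this easy to visualize, let's assume our Learning Rate (η) is 1 for a moment. The formula simplifies to:

$$w_{\text{new}} = w_{\text{old}} - \frac{\partial L}{\partial w_{\text{old}}}$$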

1. The "Magic" of the Negative Sign

Imagine we are training a network with multiple weights, but we focus on just one bias, b₂₁.

Our Loss function behaves like a valley (a parabola). We want to reach the bottom (the minima), where the Loss is lowest.

The derivative (∂L/∂b) gives us the slope. The update rule relies on subtracting this slope. Here is why that sign is genius:

Case A: The Positive Slope

Imagine we are on the right side of the valley.

Here, the slope is Positive (+).

To reach the bottom (minima), we need to move Left (decrease b).

The Math: bₙₑw = bₒₗd − (+slope).

Result: We subtract, so the value decreases. Correct Direction.

Case B: The Negative Slope

Imagine we are on the left side of the valley.

Here, the slope is Negative (-).

To reach the bottom, we need to move Right (increase b).

The Math: bₙₑw = bₒₗd − (−slope).

Result: The two negatives become a positive (b + slope). We add, so the value increases. Correct Direction.

Conclusion: The negative sign in the formula automatically adjusts our direction. We don't need if-else statements; the math naturally guides us toward the minima.
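Both cases can be seen in a couple of lines. The sketch assumes a hypothetical valley L(b) = 0.25·b² with its minimum at b = 0, so the slope is 0.5·b, and keeps the learning rate at 1 as in the discussion above.

```python
def slope(b):
    return 0.5 * b   # derivative of the assumed loss L(b) = 0.25 * b^2

def update(b, lr=1.0):
    return b - lr * slope(b)

right = update(4.0)    # positive slope: b decreases, 4.0 -> 2.0
left = update(-4.0)    # negative slope: b increases, -4.0 -> -2.0
print(right, left)     # 2.0 -2.0
```

The same `update` function handles both sides of the valley with no branching on the sign.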

2. The Effect of Learning Rate (η)

If the direction is correct, why do we need a Learning Rate (η)?

The gradient tells us the direction and steepness, but sometimes the steepness is too high.

The "Zigzag" Problem

Suppose we are at a point where the Bias is -5 and the Gradient is extremely steep, say -50.

If we update without a learning rate (η = 1):

bₙₑw = −5 − (−50) = 45

We jumped from -5 all the way to 45! We overshot the minima completely.

Now, at 45, the slope is positive and steep (say, +50).

bₙₑw = 45 − (50) = −5

We are back where we started. The model enters a Zigzag motion, bouncing back and forth without ever reaching the bottom. This is why the model fails to converge.

The Fix: Small Steps

We introduce a small learning rate, say η = 0.01.

bₙₑw = −5 − (0.01 × −50)

bₙₑw = −5 − (−0.5) = −4.5

Instead of shooting to 45, we gently slide to -4.5. By taking small, controlled steps, we avoid overshooting and smoothly descend into the minima.
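The zigzag and the fix can both be simulated. This sketch assumes a loss L(b) = (b − 20)², picked only because its slope is −50 at b = −5 and +50 at b = 45, which matches the numbers used above; the function names are illustrative.

```python
# Assumed loss L(b) = (b - 20)^2, chosen so that the slope is -50 at b = -5
# and +50 at b = 45, matching the numbers in the text.

def slope(b):
    return 2.0 * (b - 20.0)

def descend(b, lr, steps):
    path = [b]
    for _ in range(steps):
        b = b - lr * slope(b)
        path.append(b)
    return path

zigzag = descend(-5.0, lr=1.0, steps=4)     # bounces between -5 and 45
gentle = descend(-5.0, lr=0.01, steps=4)    # creeps toward the minimum at 20
print(zigzag)   # [-5.0, 45.0, -5.0, 45.0, -5.0]
print(gentle)
```

With η = 1 the path never leaves the two starting values, while with η = 0.01 every step moves a little closer to the minimum at 20.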

3. What is Convergence?

You will often hear the term "until convergence."

When we run the Backpropagation loop for 100 or 1000 epochs, we are waiting for convergence.

Convergence happens when:

The gradient becomes incredibly small (close to 0).

Therefore, the update term becomes negligible.

wₙₑw ≈ wₒₗd

The Loss stops decreasing.

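This indicates we have reached the bottom of a valley (a minimum of the Loss). In a simple bowl-shaped valley like the one above, that is the Global Minima; in a real network it may only be a local one. Either way, the model has learned as much as it can from this setup, and further training won't change the weights significantly.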
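In code, "until convergence" usually turns into a stopping rule. The sketch below is one possible version, assuming a one-parameter loss L(w) = (w − 3)² and a tolerance on the gradient size; the names `fit`, `tol`, and `max_epochs` are hypothetical.

```python
# Gradient descent with a stopping rule, on an assumed loss L(w) = (w - 3)^2.

def grad(w):
    return 2.0 * (w - 3.0)

def fit(w=0.0, lr=0.1, tol=1e-6, max_epochs=1000):
    for epoch in range(max_epochs):
        g = grad(w)
        if abs(g) < tol:          # gradient close to 0: updates are negligible
            return w, epoch
        w = w - lr * g
    return w, max_epochs

w_final, epochs_used = fit()
print(w_final, epochs_used)
```

The loop stops well before `max_epochs` because each step shrinks the distance to the minimum by a constant factor, so the gradient quickly falls below the tolerance.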

Backpropagation Optimization: The Role of Memoization

What is Memoization?

In computing, Memoization is an optimization technique used to speed up programs by trading space for time.

The concept is simple: if a function involves expensive calculations, we store (cache) the result the first time we compute it. If the program encounters the same inputs again, it simply fetches the stored result rather than recalculating it from scratch. It is a "memory trick" that significantly reduces execution time.
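In Python, the standard library's `functools.lru_cache` decorator gives a quick way to see this idea in action. The function `slow_square` below is a hypothetical stand-in for an expensive computation.

```python
from functools import lru_cache

@lru_cache(maxsize=None)
def slow_square(n):
    """Stand-in for an expensive computation (hypothetical example)."""
    return n * n

first = slow_square(12)    # computed and stored
second = slow_square(12)   # fetched from the cache, function body not re-run
print(first, second, slow_square.cache_info().hits)  # 144 144 1
```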

The Challenge: Multiple Hidden Layers (MLP)

Why is this relevant to Neural Networks? Let's look at a scenario where we have two hidden layers instead of one.

The Architecture:

Input Layer (3 nodes)

Hidden Layer 1 (3 nodes)

Hidden Layer 2 (2 nodes)

Output Layer (1 node)

In a network like this (23 trainable parameters: 17 weights and 6 biases), calculating the derivative for Gradient Descent introduces a specific complexity: The Branching Path Problem.
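The parameter count follows directly from the layer sizes. A small helper (the name `count_parameters` is an illustrative assumption) makes the arithmetic explicit for fully connected layers:

```python
def count_parameters(layer_sizes):
    """Weights plus biases for a fully connected network."""
    total = 0
    for n_in, n_out in zip(layer_sizes, layer_sizes[1:]):
        total += n_in * n_out   # one weight per connection
        total += n_out          # one bias per node in the receiving layer
    return total

print(count_parameters([3, 3, 2, 1]))  # 23
print(count_parameters([2, 2, 1]))     # 9
```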

The "Branching" Math

When we calculate the gradient for weights close to the output (like w₁₁³), the path is direct.

However, deeper in the network (e.g., the first layer weight w₁₁¹), the path splits.

w₁₁¹ influences node O₁₁.

Node O₁₁ connects to two nodes in the next layer: O₂₁ and O₂₂.

Both O₂₁ and O₂₂ travel separate paths to eventually affect the final prediction ŷ.

To find the correct derivative, we must account for the error flowing back through both paths.

The Multivariate Chain Rule:

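In calculus, if variable x affects a function h through two intermediate variables f(x) and g(x), we sum the derivatives:

$$\frac{dh}{dx} = \frac{\partial h}{\partial f} \cdot \frac{df}{dx} + \frac{\partial h}{\partial g} \cdot \frac{dg}{dx}$$

Applying this to our network, the gradient for our weight becomes a complex sum:

$$\frac{\partial L}{\partial w^{1}_{11}} = \frac{\partial L}{\partial \hat{y}} \left[ \frac{\partial \hat{y}}{\partial O_{21}} \cdot \frac{\partial O_{21}}{\partial O_{11}} + \frac{\partial \hat{y}}{\partial O_{22}} \cdot \frac{\partial O_{22}}{\partial O_{11}} \right] \frac{\partial O_{11}}{\partial w^{1}_{11}}$$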

The Solution: Efficiency via Memoization

As you can see, the math above gets messy with just two hidden layers. Imagine a Deep Neural Network with 50 layers. If we recalculated these "branching paths" from scratch for every single weight, the computational cost would be astronomical.

This is where Memoization saves the day.

In Backpropagation, we compute gradients starting from the Output Layer and moving backward.

When we calculate the error gradients for Layer 3, we store them.

When we move back to Layer 2, we simply "reuse" the stored values from Layer 3.

We do not re-calculate the chain rule for the later layers; we just fetch the cached derivative.

This efficiency defines the algorithm. Backpropagation is not just "finding derivatives"—it is a smart combination of mathematics and computer science.

Backpropagation = Chain Rule (Logic) + Memoization (Efficiency)

By caching the derivatives as we move backward, we ensure that even massive Deep Learning models can be trained in a reasonable amount of time.